MCS01 Roundup - Faster Mapnik

Sep 29, 2010

The first international Mapnik code sprint wrapped up in London and San Francisco over the weekend. It was an amazing event, with new friendships made, new features discussed, and old bugs squashed.

I'm going to be reporting on various highlights as time permits and as I gather my thoughts. This first post details some performance improvements made to Mapnik on the first day of the sprint.

Faster Mapnik

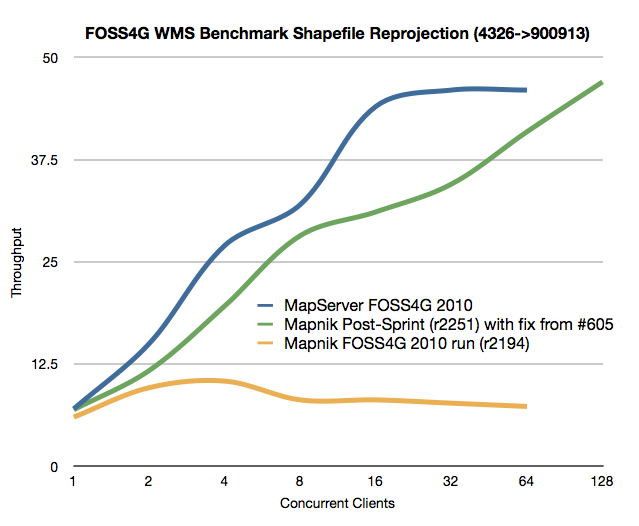

Following the Mapnik project's first year of participation in the FOSS4G WMS Shootout, we learned that Mapnik is surprisingly fast, given that we have not yet done much profiling and that most recent work has focused on advanced cartography. We also learned that the new multi-threaded C++ Paleoserver that Dane wrote for the shootout scales linearly.

Room for improvement

But there was one benchmark in which Mapnik did very poorly: on-the-fly reprojection of shapefiles. The test issues requests in Google Mercator while the data is stored in EPSG:4326. This causes Mapnik, for each and every vertex of every geometry, to call out to Proj.4 to transform from the source to the destination SRS. And because Proj.4, as used in Mapnik, is not threadsafe, every single call to Proj.4 requires a thread lock. Because the Paleoserver model is one process with many threads, this kind of locking has a much more adverse impact than it does for multiprocess servers.
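To make the cost concrete, here is a minimal Python sketch of the pattern described above: one lock acquisition per vertex. It is not Mapnik's or Proj.4's code. The function names and `_proj_lock` are hypothetical, and a plain spherical Mercator formula stands in for the real Proj.4 call.

```python
import math
import threading

# Sphere radius used by spherical ("Google") Mercator, in metres.
EARTH_RADIUS_M = 6378137.0

# Hypothetical global lock standing in for the one guarding a
# non-threadsafe projection library.
_proj_lock = threading.Lock()


def lonlat_to_mercator(lon_deg, lat_deg):
    """Stand-in for the library call: EPSG:4326 degrees to Mercator metres."""
    x = EARTH_RADIUS_M * math.radians(lon_deg)
    y = EARTH_RADIUS_M * math.log(
        math.tan(math.pi / 4 + math.radians(lat_deg) / 2)
    )
    return x, y


def reproject_geometry_locked(vertices):
    """Reproject a list of (lon, lat) pairs, locking once per vertex."""
    out = []
    for lon, lat in vertices:
        # Every rendering thread in the process queues up here,
        # once for each vertex of each geometry.
        with _proj_lock:
            out.append(lonlat_to_mercator(lon, lat))
    return out
```

With many threads in one process, all of them contend for the same lock on every vertex, which is why a single-process, multi-threaded server suffers more than a set of independent processes would.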

But we knew that the latest trunk of Proj.4 has some new threadsafe support. Tom Hughes took a closer look at this on the first afternoon of the sprint, and, based on the new support for 'projCtx', he enhanced Mapnik to avoid unnecessary thread locking when built against Proj.4 version 4.8 (current trunk). The result is just what the team was hoping for and nothing short of awesome.
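The general idea of replacing a shared lock with per-thread state can be sketched as follows. This is only an illustration in Python, not the change made in Mapnik. `ProjectionContext`, `get_context`, and `render_concurrently` are hypothetical names, the context is only loosely analogous to a 'projCtx', and a spherical Mercator formula again stands in for Proj.4.

```python
import math
import threading

EARTH_RADIUS_M = 6378137.0  # spherical Mercator sphere radius, in metres


class ProjectionContext:
    """Hypothetical per-thread projection state (loosely like a projCtx)."""

    def __init__(self):
        self.calls = 0  # mutable state that would be unsafe to share

    def forward(self, lon_deg, lat_deg):
        self.calls += 1
        x = EARTH_RADIUS_M * math.radians(lon_deg)
        y = EARTH_RADIUS_M * math.log(
            math.tan(math.pi / 4 + math.radians(lat_deg) / 2)
        )
        return x, y


_thread_state = threading.local()


def get_context():
    """Return this thread's own context, creating it on first use."""
    ctx = getattr(_thread_state, "ctx", None)
    if ctx is None:
        ctx = ProjectionContext()
        _thread_state.ctx = ctx
    return ctx


def reproject_geometry(vertices):
    """Reproject (lon, lat) pairs without taking any shared lock."""
    ctx = get_context()
    return [ctx.forward(lon, lat) for lon, lat in vertices]


def render_concurrently(geometries):
    """Reproject each geometry on its own thread and collect the results."""
    results = [None] * len(geometries)

    def worker(i):
        results[i] = reproject_geometry(geometries[i])

    threads = [
        threading.Thread(target=worker, args=(i,))
        for i in range(len(geometries))
    ]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results
```

Because each thread owns its context, no thread waits on another during the per-vertex loop; the trade-off is one context allocation per thread.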

The above graph is the result of re-running the latest Mapnik (with the fix from #605) on the official FOSS4G WMS benchmarking server. The blue line is MapServer's original result and the chartreuse line is Mapnik's original result, while the green line shows how much faster Mapnik is after Tom's fix (both Mapnik runs used Paleoserver). The green line also shows the Paleoserver throughput at 128 concurrent clients, a higher load than the original tests reached. I plan to do more tests with even greater load to see whether, and where, the Paleoserver stops scaling linearly.